Possible futures

This is the final part of a series of introductory posts about the principles of climate modelling. Others in the series: 1 | 2 | 3 | 4

The final big question in this series is:

How do we predict our future?

Everything I’ve discussed so far has been about how to describe the earth system with a computer model. How about us? We affect the earth. How do we predict what our political decisions will be? How much energy we’ll use, from which sources? How we’ll use the land? What new technologies will emerge?

We can’t, of course.

Instead we make climate predictions for a set of different possible futures. Different storylines we might face.

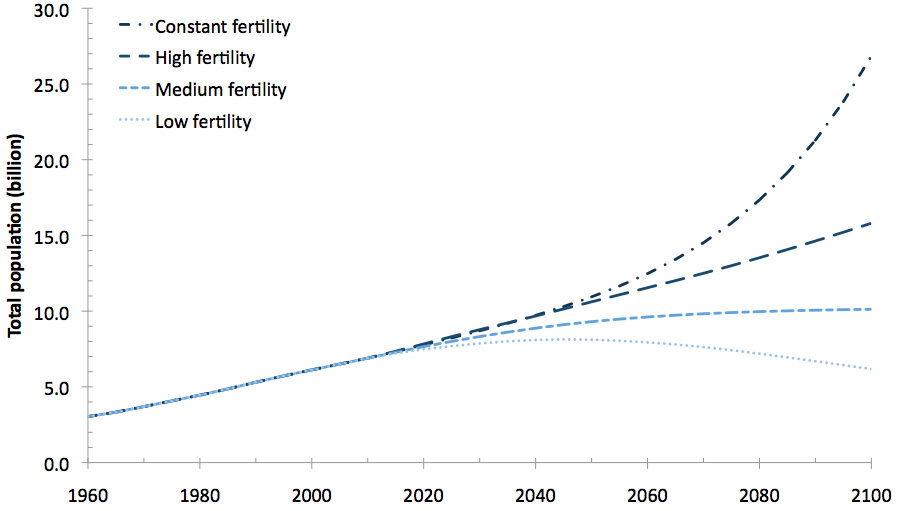

Here is a graph of past world population, and the United Nations predictions for the future using four possible fertility rates.

The United Nations aren’t trying to answer the question, “What will the world population be in the future?”. That depends on future fertility rates, which are impossible to predict. Instead they ask “What would the population be if fertility rates were to stay the same? Or decrease a little, or a lot?”

We do the same for climate change: not “How will the climate change?” but “How would the climate change if greenhouse gas emissions were to keep increasing in the same way? Or decrease a little, or a lot?” We make predictions for different scenarios.
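A toy sketch of the “what would happen if” idea, for readers who like to see it in code. Everything here is hypothetical: the `project` function, the scenario names and the growth rates are made up for illustration and are not UN or SRES values. The point is only that each output is conditional on the scenario fed in.

```python
def project(start, annual_rate, years):
    """Return `start` after compounding `annual_rate` once per year for `years` years."""
    return start * (1 + annual_rate) ** years

# Hypothetical scenarios: illustrative annual growth rates, not real data.
scenarios = {
    "keeps increasing": 0.01,
    "decreases a little": 0.005,
    "decreases a lot": 0.0,
}

# One projection per scenario, all starting from the same index value of 100.
projections = {name: project(100.0, rate, 50) for name, rate in scenarios.items()}

for name, value in projections.items():
    print(f"If growth {name}: index after 50 years = {value:.1f}")
```

None of these three numbers is a forecast. Each one answers a different “if”.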

Here is a set of scenarios for carbon dioxide (CO2) emissions. It also shows past emissions on the left-hand side.

These scenarios are named “SRES” after the Special Report on Emissions Scenarios in 2000 that defined the stories behind them. For example, the A1 storyline is

“rapid and successful economic development, in which regional average income per capita converge – current distinctions between “poor” and “rich” countries eventually dissolve”,

and within it the A1FI scenario is the most fossil-fuel intensive. The B1 storyline has

“a high level of environmental and social consciousness combined with a globally coherent approach to a more sustainable development”,

though it doesn’t include specific political action to reduce human-caused climate change. The scenarios describe CO2 and other greenhouse gases, and other industrial emissions (such as sulphur dioxide) that affect climate.

We make climate change predictions for each scenario: endings to each story. Here are the predictions of temperature.

Each broad line is an estimate of a conditional probability: the probability of a temperature increase, given a particular scenario of emissions.

Often this kind of prediction is called a projection, meaning it is a “what would happen if”, not a “what will happen”. But people do use the two interchangeably, and trying to explain the difference is what got me called disingenuous and Clintonesque.

We make projections to help distinguish the effects of our possible choices. There is uncertainty about these effects, shown by the width of each line, so the projections can overlap. For example, the highest temperatures for the B1 storyline (environmental, sustainable) are not too different from the lowest temperatures for A1FI (rapid development, fossil intensive). Our twin aims are to try to account for all the uncertainties, and to try to reduce them, to make these projections the most reliable and useful they can be.
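The overlap between projections can be checked with a very small sketch. The two ranges below are hypothetical numbers chosen only to show the idea, not values read off the graph, and `ranges_overlap` is an illustrative helper rather than anything from a climate modelling library.

```python
def ranges_overlap(a, b):
    """True if the closed intervals a = (low, high) and b = (low, high) share any value."""
    return a[0] <= b[1] and b[0] <= a[1]

# Hypothetical warming ranges in degrees C, for illustration only.
sustainable_scenario = (1.0, 3.0)
fossil_intensive_scenario = (2.5, 6.5)

print(ranges_overlap(sustainable_scenario, fossil_intensive_scenario))
```

When the ranges overlap like this, the top of one story's uncertainty range meets the bottom of the other's, which is exactly why narrowing the ranges makes the projections more useful.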

A brief aside: the approach to ‘possible futures’ is now changing. It’s a long and slightly tortuous chain to go from storyline to scenario, then to industrial emissions (the rate we put gases into the atmosphere), and on to atmospheric concentrations (the amount that stays in the atmosphere without being, for example, absorbed by the oceans or used by plants). So there has been a move to skip straight to the last step. SRES are being replaced with Representative Concentration Pathways (“RCP”).
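To make the emissions-versus-concentrations distinction concrete, here is a deliberately crude sketch of that one step of the chain. It assumes a constant “airborne fraction” (the share of emitted carbon that stays in the atmosphere) and a rough conversion between gigatonnes of carbon and parts per million of CO2. Both constants are approximate assumptions for illustration, and real carbon cycle models are far more involved than this.

```python
GTC_PER_PPM = 2.13        # assumed: roughly this many gigatonnes of carbon per 1 ppm of CO2
AIRBORNE_FRACTION = 0.45  # assumed constant; in reality it varies over time

def concentrations(start_ppm, emissions_gtc):
    """Turn a list of yearly emissions (GtC) into a CO2 concentration path (ppm)."""
    path = [start_ppm]
    for emitted in emissions_gtc:
        # Only the airborne share of each year's emissions adds to the concentration.
        path.append(path[-1] + AIRBORNE_FRACTION * emitted / GTC_PER_PPM)
    return path

# Hypothetical input: five years of constant emissions, starting from 400 ppm.
path = concentrations(400.0, [10.0] * 5)
print([round(ppm, 2) for ppm in path])
```

Emissions are the yearly inputs. Concentrations are the running total that the climate actually responds to, which is why a pathway can be defined directly in terms of concentrations.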

The physicist Niels Bohr helpfully pointed out that

“Prediction is very difficult, especially if it’s about the future.”

And the ever-wise Douglas Adams added

“Trying to predict the future is a mug’s game.”

They’re right. Making predictions about what will happen to our planet is impossible. Making projections about what might happen, if we take different actions, is difficult, for all the reasons I’ve discussed in this series of posts. But I hope, as I said in the first, I’ve convinced you it is not an entirely crazy idea.